ICLV model questions

Posted: 03 May 2020, 22:04

Dear Sir or Madam,

Hello! I hope this message finds you well. I'm a current APOLLO user who is interested in running the ICLV model for my commuting mode choice data. I was successfully able to specify and run the ICLV model for my data, but I have some questions regarding the results:

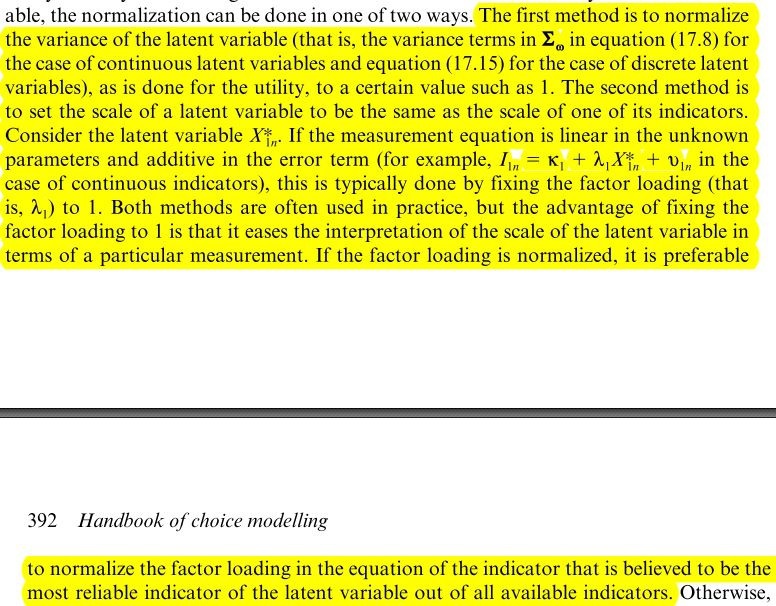

1. The attached IMAGE file (see the attached IMAGE file) shows the results of my latent variable model part of the ICLV. My question is: is there anyway to "standardized" these estimates in a similar way that I can do in LAVAAN R-package? (e.g., for "pro-car attitudes", I hope to make factor loading of "item 1" as 1.0, and other factor loadings accordingly.) In this regard, I'm also wondering why I get different results obtained from between the ICLV model and the traditional CFA (e.g., factor loadings).

2. I'm also wondering, in terms of the ICLV model, whether the APOLLO package provides any methods to calculate other model fit indices, such as McFadeen R-squared value or so.

Thanks in advance for your help.

Best,

Junghwan

*** The code attached below includes randCoeff part and Apollo_probabilities function.

========================

### Create random parameters

apollo_randCoeff=function(apollo_beta, apollo_inputs){

randcoeff = list()

randcoeff[["PROCAR"]] = CAR_gamma_female*female + CAR_gamma_inc*IncLOG + CAR_gamma_child*young_chil + CAR_gamma_age*age + CAR_gamma_nonwhite*nonwhite+ CAR_gamma_vehphh*vehphh+ CAR_gamma_parking*PARKING + PROCAR_eta

randcoeff[["ENVCON"]] = ENV_gamma_female*female + ENV_gamma_inc*IncLOG + ENV_gamma_child*young_chil + ENV_gamma_age*age + ENV_gamma_nonwhite*nonwhite+ ENV_gamma_vehphh*vehphh+ ENV_gamma_parking*PARKING + ENVCON_eta

randcoeff[["PROBUS"]] = BUS_gamma_female*female + BUS_gamma_inc*IncLOG + BUS_gamma_child*young_chil + BUS_gamma_age*age + BUS_gamma_nonwhite*nonwhite+ BUS_gamma_vehphh*vehphh+ BUS_gamma_parking*PARKING + PROBUS_eta

return(randcoeff)

}

# ################################################################# #

#### GROUP AND VALIDATE INPUTS ####

# ################################################################# #

apollo_inputs = apollo_validateInputs()

# ################################################################# #

#### DEFINE MODEL AND LIKELIHOOD FUNCTION ####

# ################################################################# #

apollo_probabilities=function(apollo_beta, apollo_inputs, functionality="estimate"){

### Attach inputs and detach after function exit

apollo_attach(apollo_beta, apollo_inputs)

on.exit(apollo_detach(apollo_beta, apollo_inputs))

### Create list of probabilities P

P = list()

ol_settings1 = list(outcomeOrdered=ITEM6,

V=CAR_zeta_i6*PROCAR,

tau=c(CAR_tau_i6_1, CAR_tau_i6_2, CAR_tau_i6_3, CAR_tau_i6_4),

rows=(task==1))

ol_settings2 = list(outcomeOrdered=ITEM17,

V=CAR_zeta_i17*PROCAR,

tau=c(CAR_tau_i17_1, CAR_tau_i17_2, CAR_tau_i17_3, CAR_tau_i17_4),

rows=(task==1))

ol_settings3 = list(outcomeOrdered=ITEM21,

V=CAR_zeta_i21*PROCAR,

tau=c(CAR_tau_i21_1, CAR_tau_i21_2, CAR_tau_i21_3, CAR_tau_i21_4),

rows=(task==1))

ol_settings4 = list(outcomeOrdered=ITEM25,

V=CAR_zeta_i25*PROCAR,

tau=c(CAR_tau_i25_1, CAR_tau_i25_2, CAR_tau_i25_3, CAR_tau_i25_4),

rows=(task==1))

ol_settings5 = list(outcomeOrdered=ITEM2,

V=ENV_zeta_i2*ENVCON,

tau=c(ENV_tau_i2_1, ENV_tau_i2_2, ENV_tau_i2_3, ENV_tau_i2_4),

rows=(task==1))

ol_settings6 = list(outcomeOrdered=ITEM9,

V=ENV_zeta_i9*ENVCON,

tau=c(ENV_tau_i9_1, ENV_tau_i9_2, ENV_tau_i9_3, ENV_tau_i9_4),

rows=(task==1))

ol_settings7 = list(outcomeOrdered=ITEM20,

V=ENV_zeta_i20*ENVCON,

tau=c(ENV_tau_i20_1, ENV_tau_i20_2, ENV_tau_i20_3, ENV_tau_i20_4),

rows=(task==1))

ol_settings8 = list(outcomeOrdered=ITEM8,

V=BUS_zeta_i8*PROBUS,

tau=c(BUS_tau_i8_1, BUS_tau_i8_2, BUS_tau_i8_3, BUS_tau_i8_4),

rows=(task==1))

ol_settings9 = list(outcomeOrdered=ITEM13,

V=BUS_zeta_i13*PROBUS,

tau=c(BUS_tau_i13_1, BUS_tau_i13_2, BUS_tau_i13_3, BUS_tau_i13_4),

rows=(task==1))

ol_settings10 = list(outcomeOrdered=ITEM18,

V=BUS_zeta_i18*PROBUS,

tau=c(BUS_tau_i18_1, BUS_tau_i18_2, BUS_tau_i18_3, BUS_tau_i18_4),

rows=(task==1))

ol_settings11 = list(outcomeOrdered=ITEM23, # 13 18 23

V=BUS_zeta_i23*PROBUS,

tau=c(BUS_tau_i23_1, BUS_tau_i23_2, BUS_tau_i23_3, BUS_tau_i23_4),

rows=(task==1))

P[["indic_PROCAR1"]] = apollo_ol(ol_settings1, functionality)

P[["indic_PROCAR2"]] = apollo_ol(ol_settings2, functionality)

P[["indic_PROCAR3"]] = apollo_ol(ol_settings3, functionality)

P[["indic_PROCAR4"]] = apollo_ol(ol_settings4, functionality)

P[["indic_ENVCON1"]] = apollo_ol(ol_settings5, functionality)

P[["indic_ENVCON2"]] = apollo_ol(ol_settings6, functionality)

P[["indic_ENVCON3"]] = apollo_ol(ol_settings7, functionality)

P[["indic_PROBUS1"]] = apollo_ol(ol_settings8, functionality)

P[["indic_PROBUS2"]] = apollo_ol(ol_settings9, functionality)

P[["indic_PROBUS3"]] = apollo_ol(ol_settings10, functionality)

P[["indic_PROBUS4"]] = apollo_ol(ol_settings11, functionality)

### Likelihood of choices

### List of utilities: these must use the same names as in mnl_settings, order is irrelevant

V = list()

V[['car']] = asc_car + b_tt * AUTO_TIME

V[['bus']] = asc_bus + b_tt * BUS_TIME + b_age_bus*age + b_female_bus*female + b_inc_bus*IncLOG + b_child_bus*young_chil + b_nonwhite_bus*nonwhite + b_vehicle_bus*vehphh + b_parking_bus*PARKING + CAR_lambda_bus * PROCAR + BUS_lambda_bus * PROBUS + ENV_lambda_bus * ENVCON

V[['bike']] = asc_bike + b_tt * BIKE_TIME + b_age_bike*age + b_female_bike*female + b_inc_bike*IncLOG + b_child_bike*young_chil + b_nonwhite_bike*nonwhite + b_vehicle_bike*vehphh + b_parking_bike*PARKING + CAR_lambda_bike * PROCAR + BUS_lambda_bike * PROBUS + ENV_lambda_bike * ENVCON

V[['walk']] = asc_walk + b_tt * WALK_TIME + b_age_walk*age + b_female_walk*female + b_inc_walk*IncLOG + b_child_walk*young_chil + b_nonwhite_walk*nonwhite + b_vehicle_walk*vehphh + b_parking_walk*PARKING + CAR_lambda_walk * PROCAR + BUS_lambda_walk * PROBUS + ENV_lambda_walk * ENVCON

### Define settings for MNL model component

mnl_settings = list(

alternatives = c(car=1, bus=2, bike=3, walk=4),

avail = 1,

choiceVar = O_Code,

V = V

)

### Compute probabilities for MNL model component

P[["choice"]] = apollo_mnl(mnl_settings, functionality)

### Likelihood of the whole model

P = apollo_combineModels(P, apollo_inputs, functionality)

### Average across inter-individual draws

P = apollo_avgInterDraws(P, apollo_inputs, functionality)

### Prepare and return outputs of function

P = apollo_prepareProb(P, apollo_inputs, functionality)

return(P)

}

Hello! I hope this message finds you well. I'm a current APOLLO user who is interested in running the ICLV model for my commuting mode choice data. I was successfully able to specify and run the ICLV model for my data, but I have some questions regarding the results:

1. The attached IMAGE file (see the attached IMAGE file) shows the results of my latent variable model part of the ICLV. My question is: is there anyway to "standardized" these estimates in a similar way that I can do in LAVAAN R-package? (e.g., for "pro-car attitudes", I hope to make factor loading of "item 1" as 1.0, and other factor loadings accordingly.) In this regard, I'm also wondering why I get different results obtained from between the ICLV model and the traditional CFA (e.g., factor loadings).

2. I'm also wondering, in terms of the ICLV model, whether the APOLLO package provides any methods to calculate other model fit indices, such as McFadeen R-squared value or so.

Thanks in advance for your help.

Best,

Junghwan

*** The code attached below includes randCoeff part and Apollo_probabilities function.

========================

### Create random parameters

apollo_randCoeff=function(apollo_beta, apollo_inputs){

randcoeff = list()

randcoeff[["PROCAR"]] = CAR_gamma_female*female + CAR_gamma_inc*IncLOG + CAR_gamma_child*young_chil + CAR_gamma_age*age + CAR_gamma_nonwhite*nonwhite+ CAR_gamma_vehphh*vehphh+ CAR_gamma_parking*PARKING + PROCAR_eta

randcoeff[["ENVCON"]] = ENV_gamma_female*female + ENV_gamma_inc*IncLOG + ENV_gamma_child*young_chil + ENV_gamma_age*age + ENV_gamma_nonwhite*nonwhite+ ENV_gamma_vehphh*vehphh+ ENV_gamma_parking*PARKING + ENVCON_eta

randcoeff[["PROBUS"]] = BUS_gamma_female*female + BUS_gamma_inc*IncLOG + BUS_gamma_child*young_chil + BUS_gamma_age*age + BUS_gamma_nonwhite*nonwhite+ BUS_gamma_vehphh*vehphh+ BUS_gamma_parking*PARKING + PROBUS_eta

return(randcoeff)

}

# ################################################################# #

#### GROUP AND VALIDATE INPUTS ####

# ################################################################# #

apollo_inputs = apollo_validateInputs()

# ################################################################# #

#### DEFINE MODEL AND LIKELIHOOD FUNCTION ####

# ################################################################# #

apollo_probabilities=function(apollo_beta, apollo_inputs, functionality="estimate"){

### Attach inputs and detach after function exit

apollo_attach(apollo_beta, apollo_inputs)

on.exit(apollo_detach(apollo_beta, apollo_inputs))

### Create list of probabilities P

P = list()

ol_settings1 = list(outcomeOrdered=ITEM6,

V=CAR_zeta_i6*PROCAR,

tau=c(CAR_tau_i6_1, CAR_tau_i6_2, CAR_tau_i6_3, CAR_tau_i6_4),

rows=(task==1))

ol_settings2 = list(outcomeOrdered=ITEM17,

V=CAR_zeta_i17*PROCAR,

tau=c(CAR_tau_i17_1, CAR_tau_i17_2, CAR_tau_i17_3, CAR_tau_i17_4),

rows=(task==1))

ol_settings3 = list(outcomeOrdered=ITEM21,

V=CAR_zeta_i21*PROCAR,

tau=c(CAR_tau_i21_1, CAR_tau_i21_2, CAR_tau_i21_3, CAR_tau_i21_4),

rows=(task==1))

ol_settings4 = list(outcomeOrdered=ITEM25,

V=CAR_zeta_i25*PROCAR,

tau=c(CAR_tau_i25_1, CAR_tau_i25_2, CAR_tau_i25_3, CAR_tau_i25_4),

rows=(task==1))

ol_settings5 = list(outcomeOrdered=ITEM2,

V=ENV_zeta_i2*ENVCON,

tau=c(ENV_tau_i2_1, ENV_tau_i2_2, ENV_tau_i2_3, ENV_tau_i2_4),

rows=(task==1))

ol_settings6 = list(outcomeOrdered=ITEM9,

V=ENV_zeta_i9*ENVCON,

tau=c(ENV_tau_i9_1, ENV_tau_i9_2, ENV_tau_i9_3, ENV_tau_i9_4),

rows=(task==1))

ol_settings7 = list(outcomeOrdered=ITEM20,

V=ENV_zeta_i20*ENVCON,

tau=c(ENV_tau_i20_1, ENV_tau_i20_2, ENV_tau_i20_3, ENV_tau_i20_4),

rows=(task==1))

ol_settings8 = list(outcomeOrdered=ITEM8,

V=BUS_zeta_i8*PROBUS,

tau=c(BUS_tau_i8_1, BUS_tau_i8_2, BUS_tau_i8_3, BUS_tau_i8_4),

rows=(task==1))

ol_settings9 = list(outcomeOrdered=ITEM13,

V=BUS_zeta_i13*PROBUS,

tau=c(BUS_tau_i13_1, BUS_tau_i13_2, BUS_tau_i13_3, BUS_tau_i13_4),

rows=(task==1))

ol_settings10 = list(outcomeOrdered=ITEM18,

V=BUS_zeta_i18*PROBUS,

tau=c(BUS_tau_i18_1, BUS_tau_i18_2, BUS_tau_i18_3, BUS_tau_i18_4),

rows=(task==1))

ol_settings11 = list(outcomeOrdered=ITEM23, # 13 18 23

V=BUS_zeta_i23*PROBUS,

tau=c(BUS_tau_i23_1, BUS_tau_i23_2, BUS_tau_i23_3, BUS_tau_i23_4),

rows=(task==1))

P[["indic_PROCAR1"]] = apollo_ol(ol_settings1, functionality)

P[["indic_PROCAR2"]] = apollo_ol(ol_settings2, functionality)

P[["indic_PROCAR3"]] = apollo_ol(ol_settings3, functionality)

P[["indic_PROCAR4"]] = apollo_ol(ol_settings4, functionality)

P[["indic_ENVCON1"]] = apollo_ol(ol_settings5, functionality)

P[["indic_ENVCON2"]] = apollo_ol(ol_settings6, functionality)

P[["indic_ENVCON3"]] = apollo_ol(ol_settings7, functionality)

P[["indic_PROBUS1"]] = apollo_ol(ol_settings8, functionality)

P[["indic_PROBUS2"]] = apollo_ol(ol_settings9, functionality)

P[["indic_PROBUS3"]] = apollo_ol(ol_settings10, functionality)

P[["indic_PROBUS4"]] = apollo_ol(ol_settings11, functionality)

### Likelihood of choices

### List of utilities: these must use the same names as in mnl_settings, order is irrelevant

V = list()

V[['car']] = asc_car + b_tt * AUTO_TIME

V[['bus']] = asc_bus + b_tt * BUS_TIME + b_age_bus*age + b_female_bus*female + b_inc_bus*IncLOG + b_child_bus*young_chil + b_nonwhite_bus*nonwhite + b_vehicle_bus*vehphh + b_parking_bus*PARKING + CAR_lambda_bus * PROCAR + BUS_lambda_bus * PROBUS + ENV_lambda_bus * ENVCON

V[['bike']] = asc_bike + b_tt * BIKE_TIME + b_age_bike*age + b_female_bike*female + b_inc_bike*IncLOG + b_child_bike*young_chil + b_nonwhite_bike*nonwhite + b_vehicle_bike*vehphh + b_parking_bike*PARKING + CAR_lambda_bike * PROCAR + BUS_lambda_bike * PROBUS + ENV_lambda_bike * ENVCON

V[['walk']] = asc_walk + b_tt * WALK_TIME + b_age_walk*age + b_female_walk*female + b_inc_walk*IncLOG + b_child_walk*young_chil + b_nonwhite_walk*nonwhite + b_vehicle_walk*vehphh + b_parking_walk*PARKING + CAR_lambda_walk * PROCAR + BUS_lambda_walk * PROBUS + ENV_lambda_walk * ENVCON

### Define settings for MNL model component

mnl_settings = list(

alternatives = c(car=1, bus=2, bike=3, walk=4),

avail = 1,

choiceVar = O_Code,

V = V

)

### Compute probabilities for MNL model component

P[["choice"]] = apollo_mnl(mnl_settings, functionality)

### Likelihood of the whole model

P = apollo_combineModels(P, apollo_inputs, functionality)

### Average across inter-individual draws

P = apollo_avgInterDraws(P, apollo_inputs, functionality)

### Prepare and return outputs of function

P = apollo_prepareProb(P, apollo_inputs, functionality)

return(P)

}